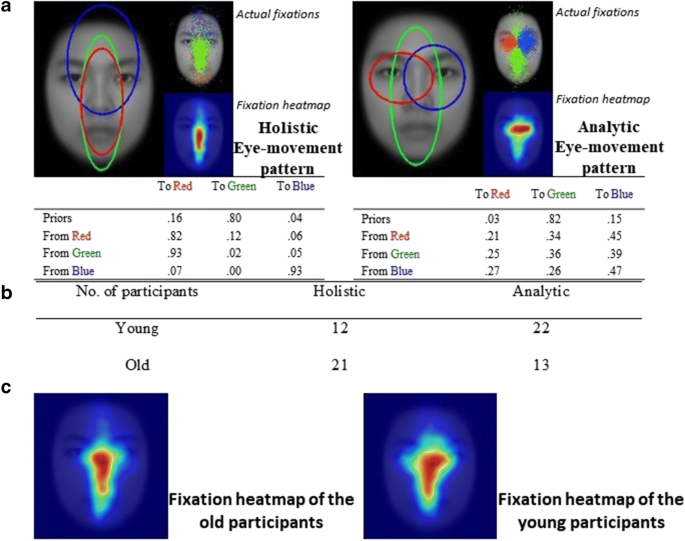

We use vision to guide our interactions with the world, but we cannot process all the visual information that our surroundings provide.

How people look at visual information reveals fundamental information about them: their interests and their states of mind. Previous studies showed that scanpaths, i.e., the sequences of eye movements made by an observer exploring a visual stimulus, can be used to infer observer-related (e.g., task at hand) and stimulus-related (e.g., image semantic category) information. However, eye movements are complex signals, and many of these studies rely on limited gaze descriptors and bespoke datasets.

Here, we provide a turnkey method for scanpath modeling and classification. This method relies on variational hidden Markov models (HMMs) and discriminant analysis (DA). HMMs encapsulate the dynamic and individualistic dimensions of gaze behavior, allowing DA to capture systematic patterns diagnostic of a given class of observers and/or stimuli.

We test our approach on two very different datasets. Firstly, we use fixations recorded while viewing 800 static natural scene images, and infer an observer-related characteristic: the task at hand. We achieve an average correct classification rate of 55.9% (chance = 33%). We show that correct classification rates positively correlate with the number of salient regions present in the stimuli. Secondly, we use eye positions recorded while viewing 15 conversational videos, and infer a stimulus-related characteristic: the presence or absence of the original soundtrack. We achieve an average correct classification rate of 81.2% (chance = 50%).

HMMs make it possible to integrate bottom-up, top-down, and oculomotor influences into a single model of gaze behavior. This synergistic approach between behavior and machine learning will open new avenues for simple quantification of gazing behavior. We release SMAC with HMM, a Matlab toolbox freely available to the community under an open-source license agreement.
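To make the idea of classifying scanpaths with HMMs more concrete, here is a minimal Python sketch. It is not the SMAC with HMM toolbox and not the method described above: the toolbox fits variational HMMs and passes their parameters to discriminant analysis, whereas this sketch swaps in a simpler stand-in, scoring a scanpath under two hand-written discrete HMMs with the forward algorithm and picking the class with the higher likelihood. The region-of-interest coding, the two class labels (`sticky`, `switching`), and every probability below are hypothetical values chosen for illustration.

```python
import math


def log_likelihood(obs, start, trans, emit):
    """Scaled forward algorithm: log P(obs | model) for a discrete-emission HMM.

    obs   : non-empty list of observation indices (here, region-of-interest ids)
    start : start[s]    = P(first hidden state is s)
    trans : trans[p][s] = P(next state is s | current state is p)
    emit  : emit[s][o]  = P(observing o | hidden state is s)
    """
    n_states = len(start)
    # Start distribution weighted by the first emission.
    alpha = [start[s] * emit[s][obs[0]] for s in range(n_states)]
    log_lik = 0.0
    for t in range(len(obs)):
        if t > 0:
            # Propagate one step through the transition matrix, then emit.
            alpha = [
                sum(alpha[p] * trans[p][s] for p in range(n_states)) * emit[s][obs[t]]
                for s in range(n_states)
            ]
        scale = sum(alpha)
        if scale == 0.0:
            return float("-inf")  # sequence is impossible under this model
        log_lik += math.log(scale)
        alpha = [a / scale for a in alpha]  # rescale to avoid underflow
    return log_lik


def classify(obs, models):
    """Return the label of the model under which obs is most likely."""
    return max(models, key=lambda label: log_likelihood(obs, *models[label]))


# Hypothetical setup: 2 hidden states, 3 regions of interest
# (0 = left face, 1 = right face, 2 = background).
emit = [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1]]
models = {
    # Observers who dwell on one region before moving on.
    "sticky": ([0.5, 0.5], [[0.9, 0.1], [0.1, 0.9]], emit),
    # Observers who alternate between the two regions.
    "switching": ([0.5, 0.5], [[0.1, 0.9], [0.9, 0.1]], emit),
}

dwelling_scanpath = [0, 0, 0, 0, 1, 1, 1, 1]
alternating_scanpath = [0, 1, 0, 1, 0, 1, 0, 1]

print(classify(dwelling_scanpath, models))
print(classify(alternating_scanpath, models))
```

With real gaze data the model parameters would be learned from recorded fixations rather than written by hand, and fixation coordinates would be modeled directly rather than quantized into a few regions.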